By being open and consistent about how AI supports learning materials, an MBA accounting module built trust, encouraged responsible use, and improved engagement with flipped resources—without making transparency feel like a lecture.

Our challenge / opportunity

In NBS8122 Accounting and Finance, a core module taken by all MBA students, I wanted to take advantage of generative AI’s potential to help me produce high-quality learning support materials more efficiently, while also responding to a live sector-wide question: how do we disclose staff AI use to students in a way that is meaningful, honest, and workable? The cohort was entirely international, dominated by students from the Indian subcontinent. They were mature learners with a minimum of three years’ prior work experience and strong English-language capability, typically bilingual and highly career-focused. The module is delivered using a flipped-classroom approach, so the students’ weekly engagement with preparatory resources is central to their learning.

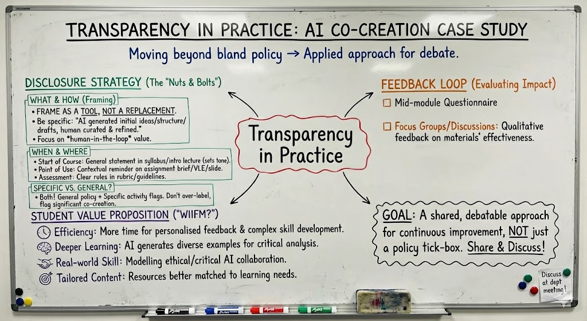

The opportunity, then, was twofold: to scale and enrich the learning resources that underpin a flipped design, and to do so transparently, modelling what good disclosure looks like in practice. The key constraints were consistency of messaging, and pragmatic limits of time and workload. I wanted students to understand what I was doing and why, but I did not want disclosure to become a repeated or distracting theme that overshadowed the accounting and finance learning itself.

Our solution (approach)

The solution began with a clear, up-front disclosure that set a constructive tone. At the start of the module and on the VLE, I explained that over the past year I had been integrating generative AI tools (including interactive chatbots) into support materials, and that I wanted students to understand both the rationale and the responsible-use boundaries. (NBS8122 AI Introduction) I framed the “why” in student-centred terms: AI would help me personalise learning through more examples and targeted support, increase interactivity by enabling follow-up questions outside class time, and offer timely feedback through immediate explanations and guidance. I then established expectations through practical “do’s and don’ts”: AI should supplement, not replace, core learning; outputs must be checked and evaluated critically; academic integrity and appropriate acknowledgement matter; and students should avoid sharing personal data or using AI to bypass fundamentals. This approach aimed to answer the implicit student question, “What’s in it for me?”, while also normalising careful, ethical use.

With that disclosure in place, I introduced three main complementary forms of AI-supported resources aligned to the flipped weekly rhythm. First, I created concise weekly summary videos, around ten minutes each, to support students’ engagement with readings and help them consolidate key ideas quickly; these were produced using HeyGen. Second, I developed AI co-created formative tests in HTML, generated with Gemini, to give students regular opportunities to check understanding and practise concepts independently. Third, I provided an interactive chatbot experience via Google’s NotebookLM, designed to help students explore the material, ask questions, and follow up on points of confusion as they worked through the module content.

A critical feature of the implementation was ownership and quality control. All AI-produced items intended as “core support resources” (such as videos and tests) were personally reviewed by me before release to check accuracy, coherence, and alignment with the module’s aims. By contrast, chatbot use was explicitly positioned as requiring sceptical engagement: students were warned that conversational outputs can be plausible but wrong, and that they should verify details and return to textbooks and module materials. The result was a blended ecosystem of resources that extended support beyond the classroom while keeping academic responsibility and trust at the forefront. Importantly, the work was completed by me alone, making the process replicable for colleagues who may not have dedicated learning-technology support.

The impact (results)

The outcomes suggested that transparent disclosure can sit comfortably alongside meaningful innovation in learning support. Eighteen of twenty-five students (72%) responded to the student survey, and the module received an overall rating of 4.5 out of 5. Student Voice feedback was strongly positive about the resource set, with comments such as “Online videos are helpful,” “Useful AI tools and website for financial reports,” and “Excellent Resources.”

Beyond satisfaction, there were encouraging indicators of learning engagement. Seminar interaction remained consistently strong, and students performed well overall in the assignment. An additional, unplanned impact was transfer: students reported adopting some of the introduced tools—particularly Google NotebookLM—in other modules once they had been shown how to use them responsibly and effectively. In other words, the approach not only supported this module’s flipped learning design, but also appeared to increase students’ confidence in navigating AI tools as part of their wider MBA study practices.

Lessons learned

The clearest lesson was that transparency works best when it is purposeful, student-centred, and proportionate. Being explicit and consistent about staff use of AI—without over-emphasising it—helped to build trust and reduced the risk that students would feel AI was being “hidden” or used carelessly. Framing disclosure around learner benefits, paired with clear boundaries on responsible use, made it easier for students to see AI as an aid to learning rather than as a shortcut or a threat to academic standards.

A second lesson was the importance of quality assurance and “taking ownership” of AI outputs. Personally checking AI-produced materials before release felt critical both pedagogically and ethically; it also reinforced the message that AI is a tool within academic expertise, not a substitute for it. Relatedly, it was valuable to differentiate between materials that could be thoroughly vetted (such as videos and structured formative tests) and tools that demand sceptical use (such as chatbots), and to communicate that distinction explicitly.

One surprising outcome was just how strongly students valued the weekly ten-minute summary videos. That response suggests that, in a demanding programme with busy, career-focused students, concise, well-targeted recap materials can be disproportionately impactful—especially when they support a flipped approach that depends on regular preparation.

If repeating the initiative, I would build in small follow-up focus groups to gather richer evidence about how students used the tools, what study behaviours changed, and which parts of the transparency message mattered most. The main limitations to note are that the cohort was small, mature, international, and highly career-oriented, so the approach may not translate directly to large undergraduate settings without adaptation, and different tool types may require different levels of disclosure and different safeguards. Even with those limitations, the experience offers a practical, lightweight model for colleagues: start with a clear rationale, make expectations explicit, keep the message consistent, and pair innovation with visible academic responsibility.

Tips for colleagues

Start by making transparency purposeful rather than performative. A short, student-centred statement early in the module—explaining what you are using AI for, why it benefits learning, and how students should engage critically—sets expectations without repeatedly interrupting teaching. Keep the message consistent by using the same wording across your first session and the VLE, and link it to academic integrity and good scholarly habits so it feels like part of learning rather than an add-on.

When adapting the approach, begin small and choose one or two resource types that match your teaching design. In a flipped or blended module, weekly recap videos or low-stakes formative quizzes tend to produce quick gains because they support preparation and retrieval practice. Whatever you generate with AI, take visible ownership through a simple quality assurance routine: personally check accuracy, align language and examples to your module, and be explicit about what has been vetted versus what students should treat sceptically. Chatbots can be valuable for “anytime questions,” but they work best when you clearly frame them as exploratory tools and remind students to verify outputs against core readings and lecture materials.

Skills and attributes

Students were able to develop the following attributes:

Education for Life Strategy

This case study reflects the following aims of the Education for Life strategy:

- Fit for the future: To ensure our students are fit for their future, our teaching is fit for the future of our offer, and our colleagues are fit for the future of HE

Authors

Dr David Grundy, Director of Digital Education, Newcastle University Business School